What is robots.txt?

A robots.txt file is a simple way for websites to communicate with web crawlers and other web robots.

Robots.txt tells search engine crawlers which pages or sections of your website they may crawl and which ones they should skip, such as content you don’t want to appear in search results.

The robots.txt file is a simple text file, but it is very important for your site’s search engine optimization.

To check your robots.txt file, open your browser and type yourdomain.com/robots.txt into the address bar. What do you see?

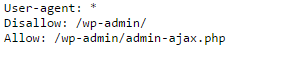

In most cases, WordPress generates a robots.txt file automatically, so you will probably see something like the example above.
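If you would rather check the file from a script than from the browser, the minimal sketch below uses only Python’s standard library. `robots_url` and `fetch_robots_txt` are hypothetical helper names, and `https://example.com/` stands in for your own site.

```python
from urllib.parse import urlsplit, urlunsplit
from urllib.request import urlopen


def robots_url(site_url: str) -> str:
    """Return the robots.txt URL for the host of any page URL.

    Crawlers only look for robots.txt at the root of the host,
    so the path, query and fragment of site_url are dropped.
    """
    parts = urlsplit(site_url)
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


def fetch_robots_txt(site_url: str, timeout: float = 10.0) -> str:
    """Download robots.txt and return it as text (needs network access)."""
    with urlopen(robots_url(site_url), timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")
```

For example, `print(fetch_robots_txt("https://example.com/"))` prints the same rules you would see in the browser. The helper always asks for /robots.txt at the root of the host, because that is the only place crawlers look for it.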

You can also edit this file directly from your WordPress dashboard with a plugin. I would highly recommend the Yoast SEO plugin.

Install and activate it from your dashboard: go to Dashboard > Plugins > Add New and type Yoast SEO in the search box.

After activating the plugin, click SEO in the left sidebar of your dashboard, then go to SEO > Tools > File editor, edit your robots.txt file and save the changes.

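If you prefer to create the file manually, open a plain-text editor such as Notepad, write the same rules as in the file above and save it as robots.txt.

Then open your cPanel File Manager or an FTP client and upload the file to your site’s root directory, so that it is available at yourdomain.com/robots.txt.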
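If you keep a local copy of your site files, a short script can produce the same plain-text file for you to upload. This is a sketch that assumes the rules described in this post (block /wp-admin/, allow everything else); `RULES`, `render_robots_txt` and `write_robots_txt` are hypothetical names, and you still upload the resulting file as described above.

```python
from pathlib import Path
from typing import Sequence

# Assumed rules: block the wp-admin/ folder for every crawler, allow the rest.
RULES = [
    "User-agent: *",
    "Disallow: /wp-admin/",
]


def render_robots_txt(rules: Sequence[str]) -> str:
    """Join the rules into robots.txt text: one directive per line."""
    return "\n".join(rules) + "\n"


def write_robots_txt(folder: str, rules: Sequence[str]) -> Path:
    """Write the rules as a UTF-8 plain-text robots.txt file inside folder."""
    path = Path(folder) / "robots.txt"
    path.write_text(render_robots_txt(rules), encoding="utf-8")
    return path
```

Calling `write_robots_txt(".", RULES)` creates robots.txt in the current folder, ready to upload to the root directory.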

With the rules above in your robots.txt file, Googlebot is allowed to crawl every page of your site.

The only exception is the wp-admin/ folder in the root directory, which is disallowed, so Googlebot will not crawl it.
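To see how a crawler reads these rules, Python’s standard `urllib.robotparser` module can evaluate them locally. This is a minimal sketch assuming the file contains only `User-agent: *` and `Disallow: /wp-admin/`; `is_allowed` is a hypothetical helper and `example.com` is a placeholder domain. One caveat: this parser applies the first matching rule in file order, while Google uses the most specific matching rule, so results can differ when Allow and Disallow lines overlap.

```python
from urllib.robotparser import RobotFileParser

# Assumed rules: the same two lines discussed above.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
"""

parser = RobotFileParser()
# parse() takes the file as a list of lines, so no network request is made.
parser.parse(ROBOTS_TXT.splitlines())


def is_allowed(url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the parsed rules let user_agent crawl url."""
    return parser.can_fetch(user_agent, url)


for url in ("https://example.com/hello-world/",
            "https://example.com/wp-admin/options.php"):
    print(url, "->", "allowed" if is_allowed(url) else "blocked")
```

Running it prints "allowed" for the blog post and "blocked" for the wp-admin/ URL. Because the rules sit under `User-agent: *`, any other bot name gives the same answers.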

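In the same way, you can add a Disallow rule for any file or folder you want to keep Google from crawling. This does not guarantee privacy, though: a disallowed URL can still appear in search results if other pages link to it.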

Keep in mind that some bots don’t read your robots.txt file at all and will crawl and index your pages anyway.

They can simply ignore its rules, because robots.txt is a request, not a security measure. That’s why you should also protect the pages you don’t want indexed in another way, by marking them noindex and nofollow.

Adding noindex and nofollow tags is another way to make sure your private or duplicate pages stay out of the index. To do this:

Go to your WordPress dashboard > Plugins > Add New.

Type GD Press in the search box and click Search Plugins.

Install and activate the first result. Then go back to your dashboard and open that plugin’s settings.

Uncheck the “Use global meta tag settings” option and choose noindex, nofollow.

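This tells search engines such as Google not to index those pages, even if they find links to them.

Combined with robots.txt, it works like a second layer of protection for your web pages. One caveat: Google can only see a noindex tag on a page it is allowed to crawl, so if the same page is also blocked in robots.txt, its URL may still appear in search results.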
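To confirm that the plugin actually added the tag, you can look for a robots meta tag in the page’s HTML. The minimal sketch below uses Python’s built-in `html.parser`; it assumes the plugin writes a standard tag such as `<meta name="robots" content="noindex, nofollow">`, and `RobotsMetaParser` and `robots_directives` are hypothetical names.

```python
from html.parser import HTMLParser
from typing import Set


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: Set[str] = set()

    def handle_starttag(self, tag, attrs):
        # HTMLParser already lowercases tag and attribute names.
        if tag != "meta":
            return
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() != "robots":
            return
        # content="noindex, nofollow" -> {"noindex", "nofollow"}
        for directive in attributes.get("content", "").split(","):
            if directive.strip():
                self.directives.add(directive.strip().lower())


def robots_directives(html: str) -> Set[str]:
    """Return the lowercase robots meta directives found in an HTML page."""
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    return parser.directives


page = '<head><meta name="robots" content="noindex, nofollow"></head>'
print("noindex" in robots_directives(page))  # True
```

You can pass in the HTML of any page you marked noindex; if the result does not contain "noindex", the tag is missing from that page.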

Finally, you can check your blocked URLs in Google Webmaster Tools (now Google Search Console). In your webmaster dashboard, go to Crawl > Crawl Errors to see all the pages denied by your robots.txt file.

That’s all, guys. That’s how to optimize your robots.txt file for search engines. Create your robots.txt file and noindex your pages as needed.
